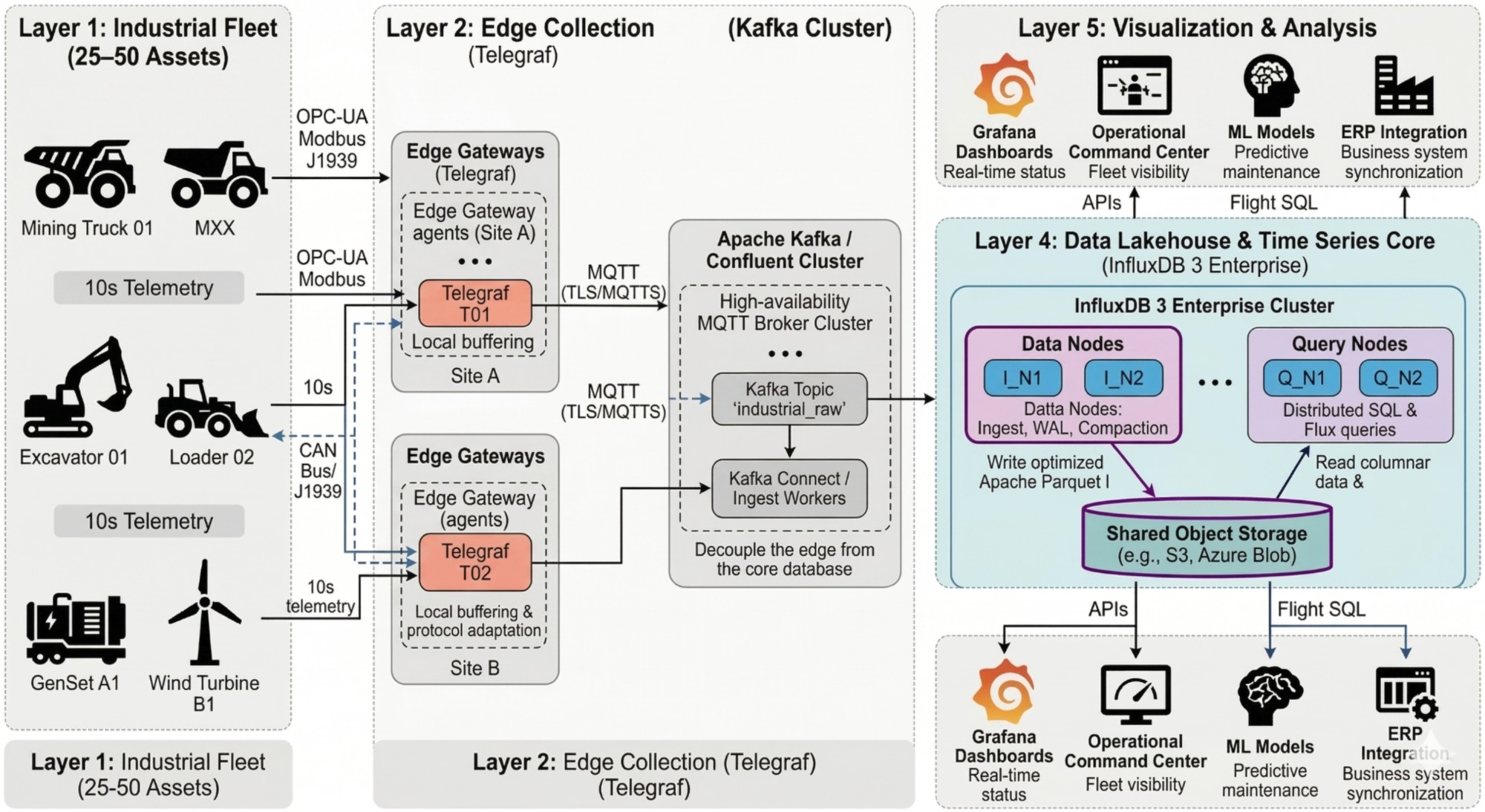

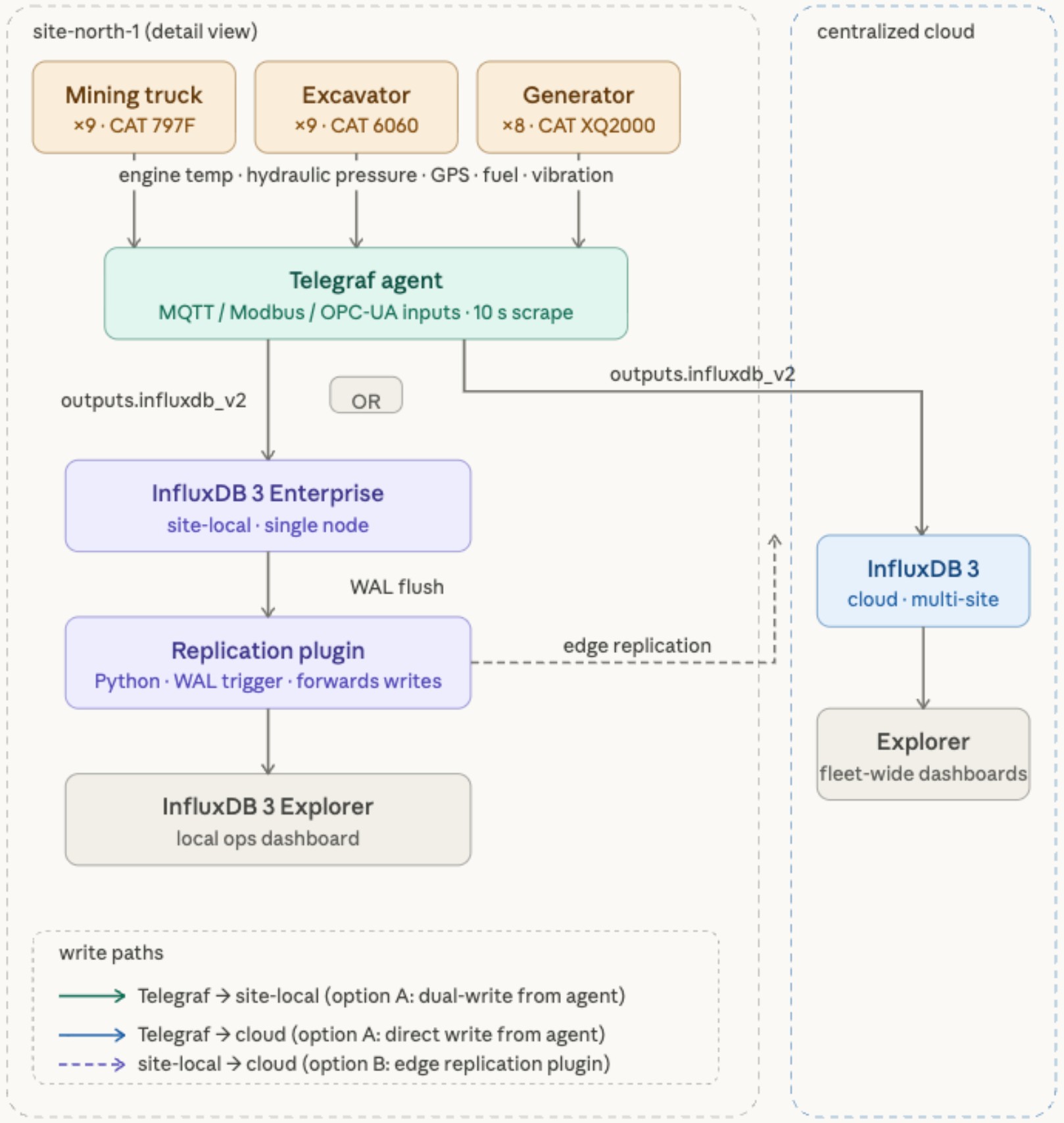

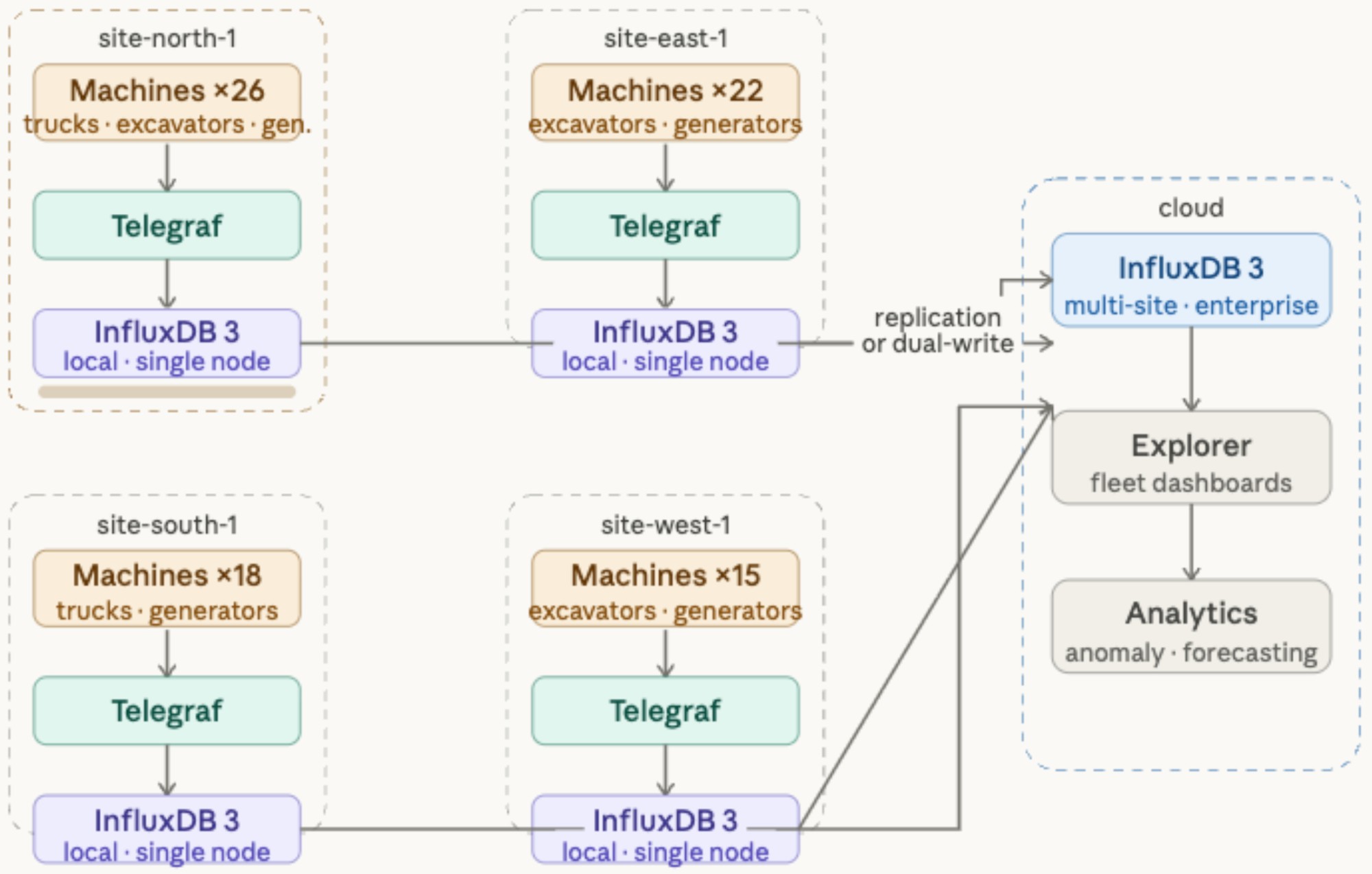

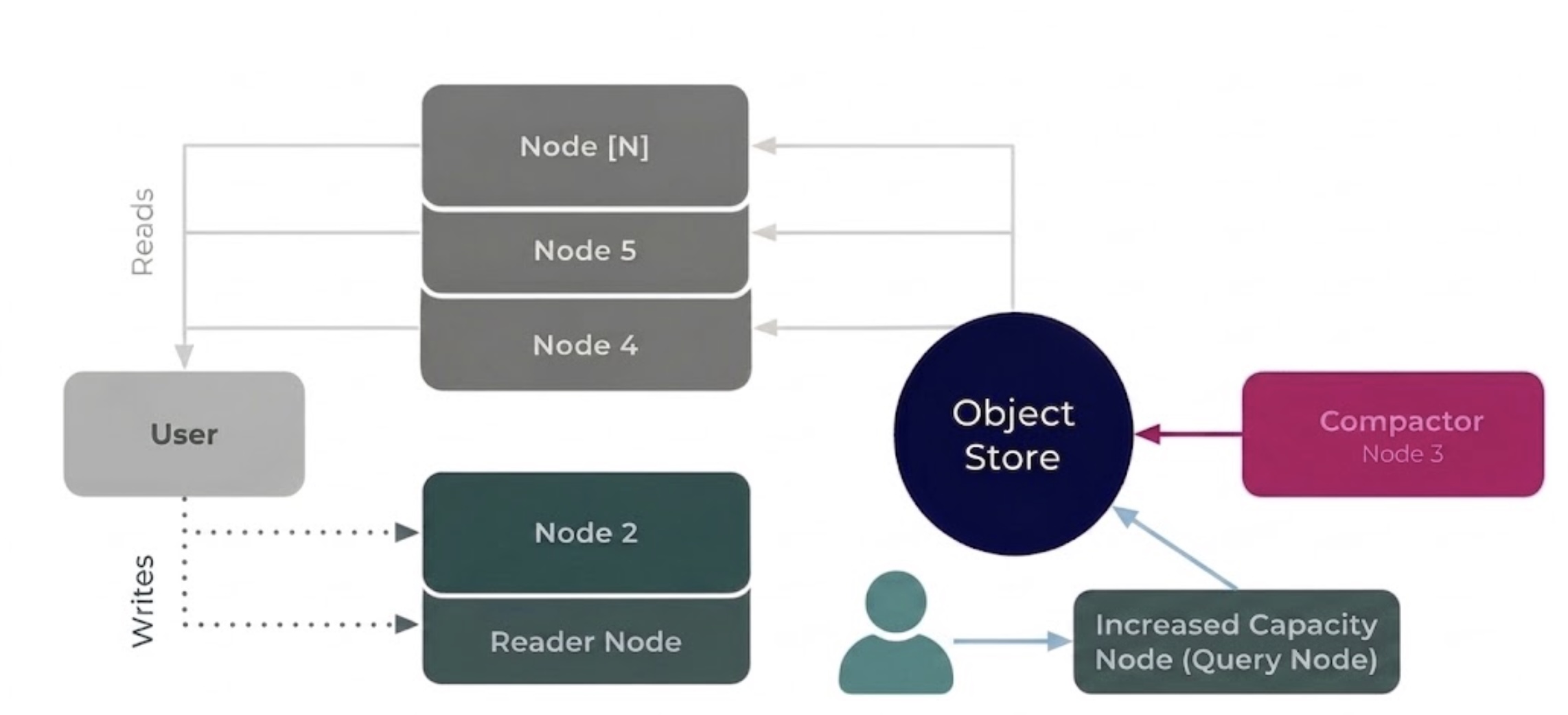

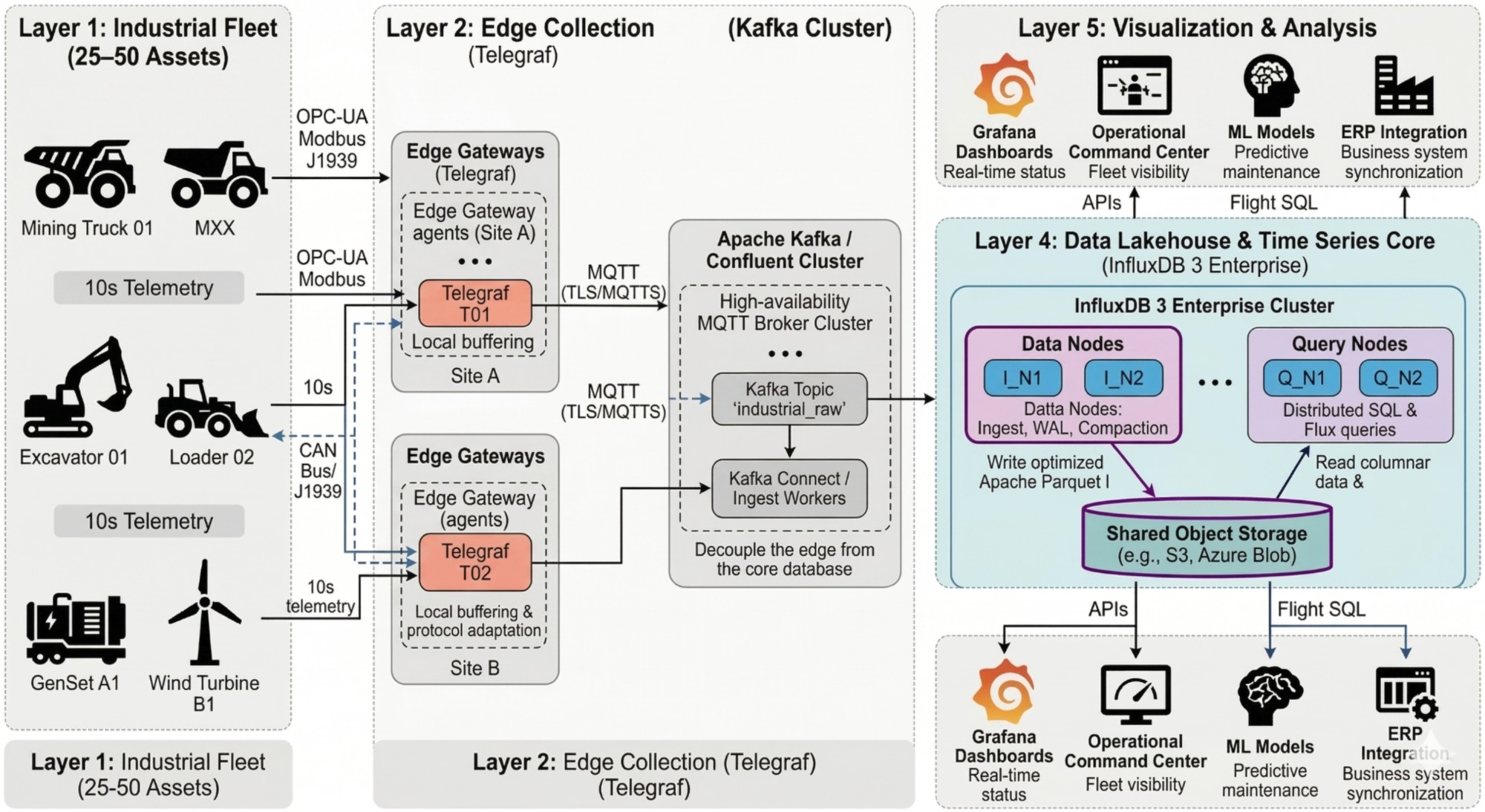

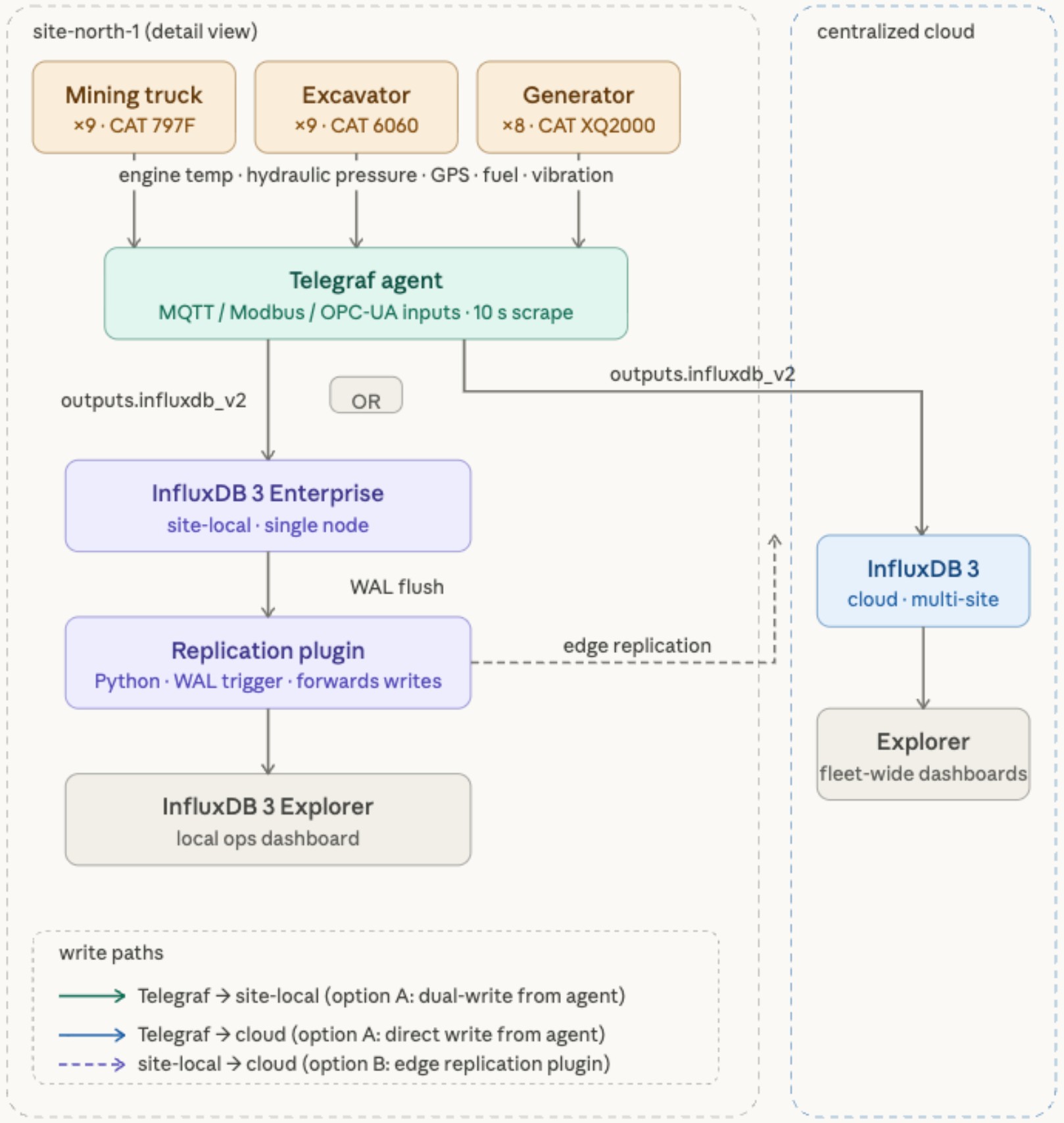

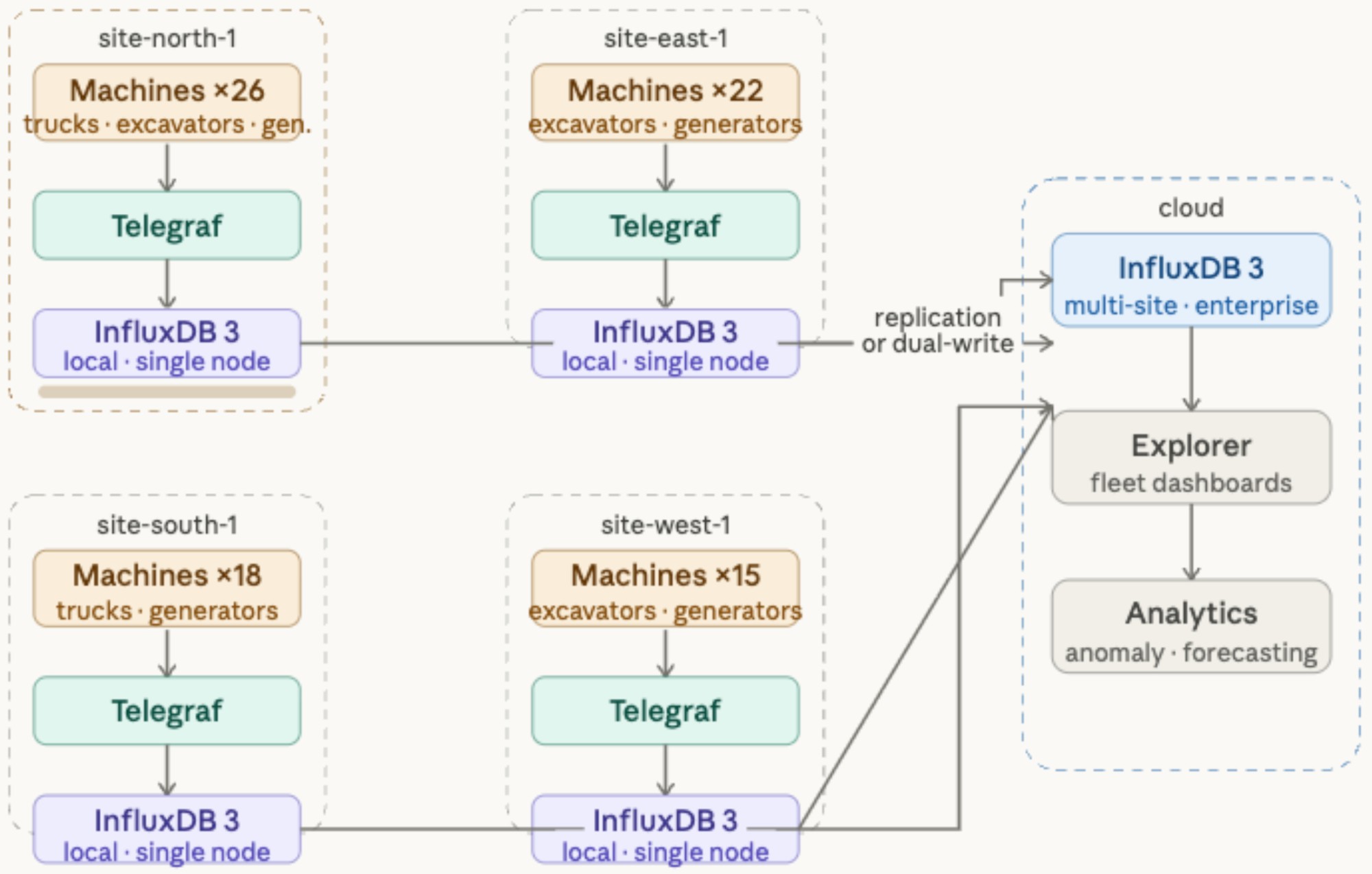

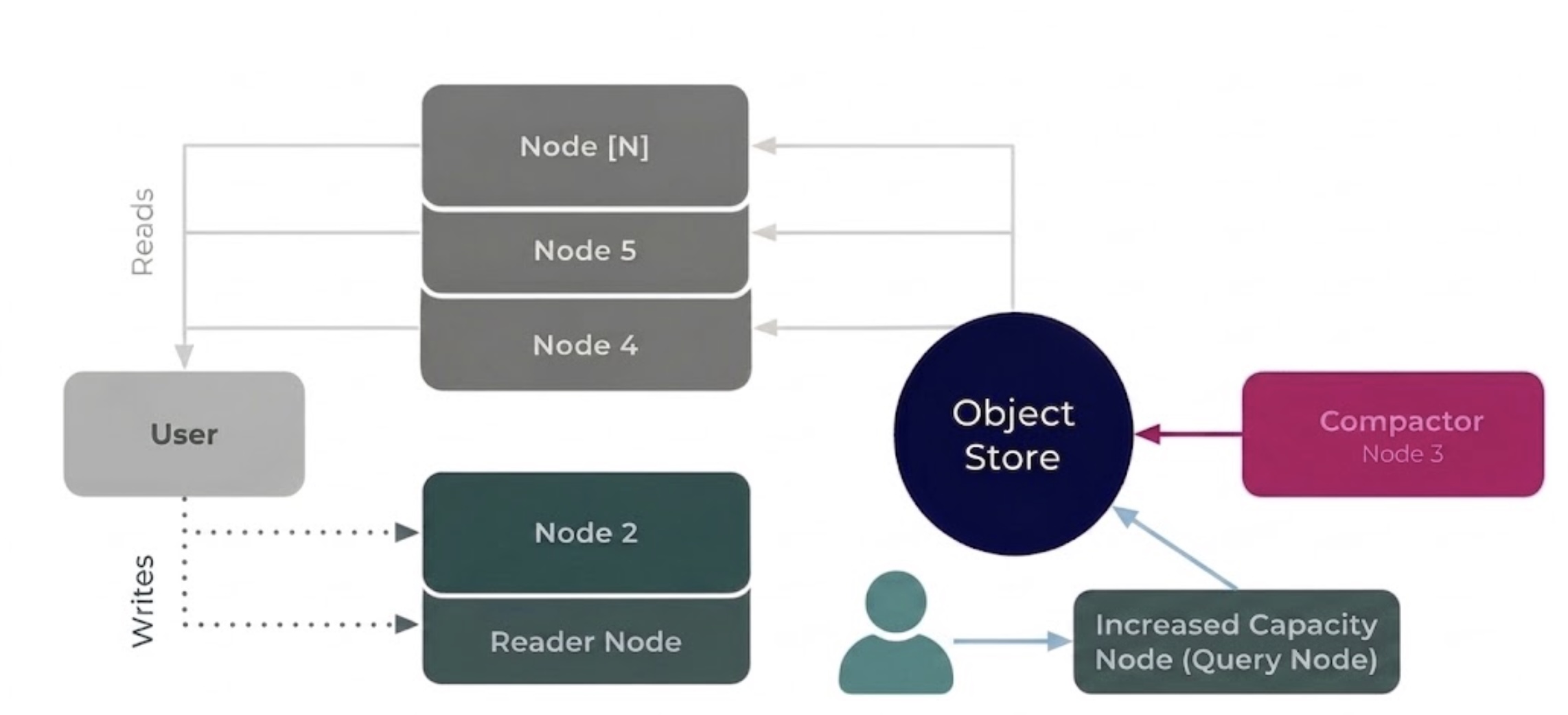

Reference architecture

End-to-end data flow from industrial assets to fleet dashboards, built on InfluxDB 3 Enterprise.

Caterpillar's fleet generates billions of data points a day. CatWork gives you automated retention, elastic scaling, and real-time telemetry — so your engineers can build, not firefight.

Manual retention scripts and over-provisioned infrastructure are draining elite engineering talent and budget.

Migrate to InfluxDB v3 with CatWork to eliminate both problems through a purpose-built architecture.

Toggle v1 → v3 to see the visual impact across every site. Click any site card for detail.

Hover any site pin to inspect status, storage, and latency. Toggle v1 → v3 to see migration impact.

12 surge events per year, 48 hours each. Click any month to explore the workload queue and v1 vs v3 impact.

Adjust the sliders to model your fleet's cost and time savings.

From edge ingestion to global dashboards, built on InfluxDB v3.

See how CatWork modernizes Caterpillar's fleet data platform — saving $1.78M a year and 1,000+ hours of engineering time.